3D & Video Production Performance

Directional, production-aligned expectations for 3D rendering, simulation, and professional video workflows. Customize the tables per product configuration.

CPU: 9995WX

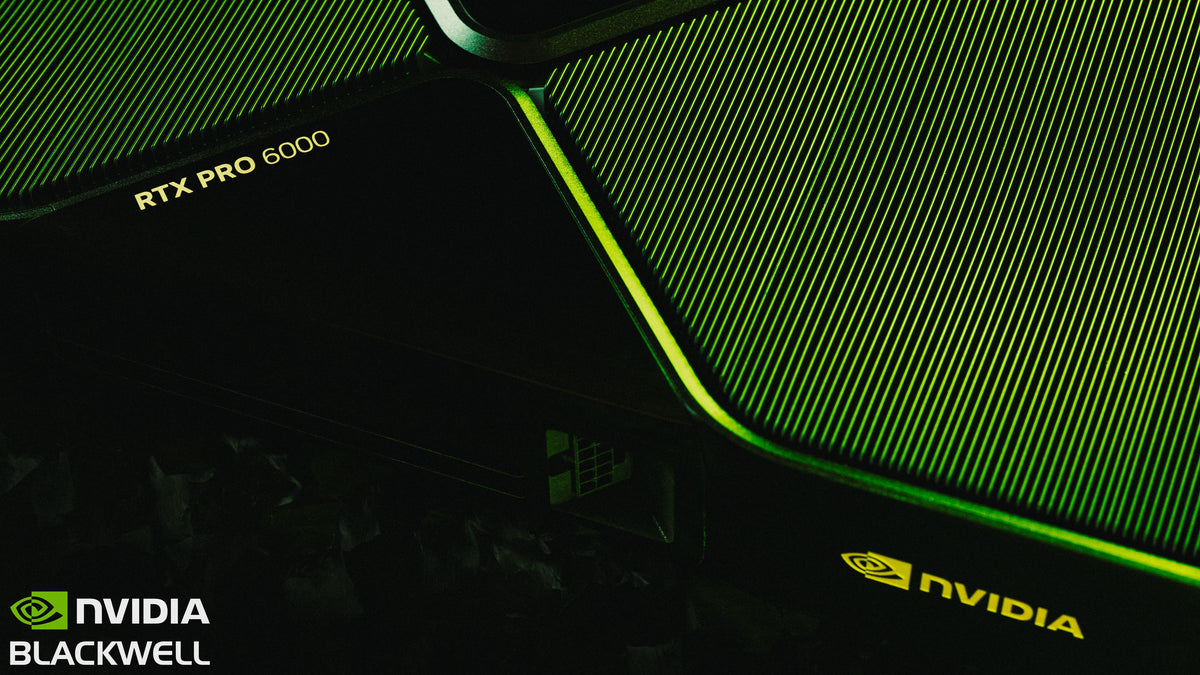

GPU: Dual RTX Pro 6000

Memory: Up to 512GB

Storage: Up to 10TB NVMe

| Software | Workload | Expected performance class |
| --- | --- | --- |
| Blender (Cycles) | GPU path tracing / final-frame rendering | ✅ Designed to handle complex scenes; performance varies by scene fit and settings |
| Cinema 4D + Redshift | GPU rendering + lookdev | ✅ Strong RTX throughput; scaling and results depend on scene/renderer |
| OctaneRender | Photoreal path tracing | ✅ Built for heavy RTX workloads; multi-GPU behavior depends on your scenes |
| Unreal Engine 5 | Nanite + Lumen viewport | ✅ Designed for large projects; GPU + RAM help complex assets and editor responsiveness |

Use these as directional expectations. Actual performance varies by renderer settings, scene fit in VRAM, and effects complexity.

Legend

🚀 = Excellent / best-in-class

✅ = Supported / recommended

⚠️ = Possible with constraints

| Software | Workflow | Typical workflows supported |
| --- | --- | --- |
| DaVinci Resolve | 4K–8K editing + grading (GPU accelerated) | ✅ Built for GPU-accelerated editing and effects; codec/effects dependent |
| Adobe Premiere Pro | Multi-stream 4K editing + GPU effects | ✅ Excellent workflow support; depends on codecs and effects stack |
| After Effects | Large comps + RAM-heavy projects | ✅ Designed to handle large comps with heavy caching (project dependent) |

Results depend on codec, effects stack, GPU acceleration settings, and storage throughput.

| Resource |

Benefit |

| 192GB RAM |

Massive scenes, large After Effects comps |

| Up to 10TB NVMe storage |

Fast project and asset loading |

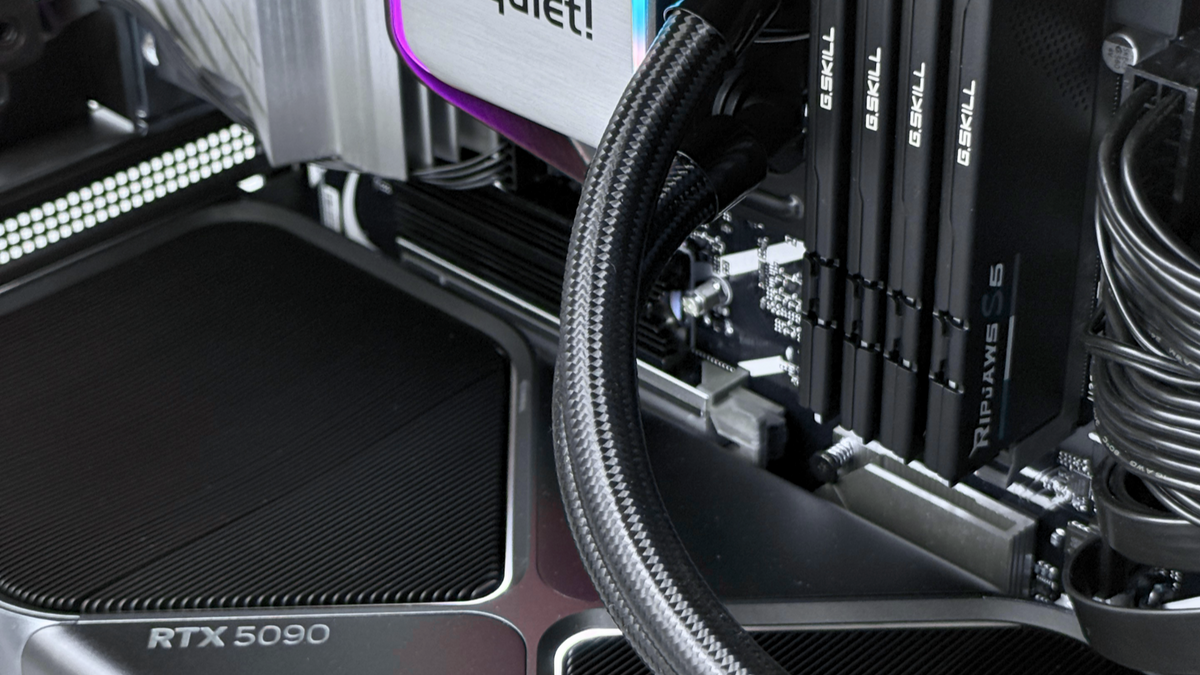

| RTX 5090 / RTX Pro 6000 VRAM |

Large textures, heavy scenes |

| Ryzen 9950X3D |

High viewport and CPU simulation performance |

Designed to handle large textures, heavy scenes, and RAM-intensive comps, especially when projects stay on fast NVMe storage.

| System | Relative render speed |
| --- | --- |
| Typical RTX 5080 workstation | 1× |
| Single RTX 5090 or RTX Pro 6000 workstation | ~1.2–1.4× |
| Dual high-end GPU workstation (same class) | ~2.0–2.6× |

Directional comparison for GPU-rendered workloads. Actual results vary by renderer support, scene fit, and multi-GPU scaling behavior.
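To make the relative render speeds above concrete, the following is a minimal sketch that converts them into estimated render-time ranges. The function name `estimate_render_minutes` and the 60-minute baseline are hypothetical and chosen only for illustration; the multipliers are the directional ranges from the comparison above, not measured benchmarks.

```python
# Directional speed multipliers from the comparison above (1.0 = baseline system).
# Each entry is (low, high); these are illustrative ranges, not benchmark results.
RELATIVE_SPEED = {
    "Typical RTX 5080 workstation": (1.0, 1.0),
    "Single RTX 5090 or RTX Pro 6000 workstation": (1.2, 1.4),
    "Dual high-end GPU workstation (same class)": (2.0, 2.6),
}


def estimate_render_minutes(baseline_minutes, speed_range):
    """Return (fastest, slowest) estimated minutes for a job that takes
    `baseline_minutes` on the 1x baseline system.

    Hypothetical helper: render time is assumed to scale inversely with
    the relative speed multiplier.
    """
    low, high = speed_range
    return baseline_minutes / high, baseline_minutes / low


# Assumed example: a frame that takes 60 minutes on the baseline system.
BASELINE_MINUTES = 60.0

for system, speed_range in RELATIVE_SPEED.items():
    fastest, slowest = estimate_render_minutes(BASELINE_MINUTES, speed_range)
    print(f"{system}: about {fastest:.0f} to {slowest:.0f} min")
```

Under these assumptions, a 60-minute baseline render would land at roughly 23 to 30 minutes on the dual-GPU class system. Real jobs can fall outside these ranges when the scene does not fit in VRAM or the renderer scales poorly across GPUs.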

Note: All guidance is directional. Actual results vary by scene complexity, codecs, effects, renderer settings, and software versions.